Agentic RE: Automating Reverse Engineering & Vulnerability Research with AI

Hands‑on labs bring together RE tools and agentic AI for scalable workflows.

📅 This training is running at multiple events in 2026:

- March 27 — Ringzer0 Countermeasure 2026 (Virtual)

- April 26 — DEFCON 2026 (Singapore)

- June 15 — REcon 2026 (Montreal, Canada)

- July 6–10 — CLEARSECLABS 2026 (Virtual)

- August 1–2 — Black Hat USA 2026 (Las Vegas, NV)

- August 3–4 — Black Hat USA 2026 (Las Vegas, NV)

- August 10–11 — DEFCON 2026 (Las Vegas, NV)

Browse all dates on the Upcoming Events calendar.

Overview

Track: AI-Native — Lead with AI orchestration from day one. Assumes RE comfort as a prerequisite. See Training Pathways for how this course relates to our Manual-First offerings.

This hands-on course introduces Agentic Workflows for reverse engineering and vulnerability research. You'll combine large language models (LLMs), the Model Context Protocol (MCP), and tools like Ghidra to design, configure, and orchestrate AI-powered systems that automate binary analysis across Windows, macOS, iOS, and Android.

By the end, you'll have built a working agentic workflow that analyzes binaries, surfaces potential vulnerabilities, validates findings, and triages results across platforms.

This is not a "prompt engineering for RE" course, and it is not a tour of tools you could learn from a README. You write real code: custom MCP servers that give LLMs access to Ghidra, optimization pipelines that make local models accurate, and agents that reason through binaries autonomously. The skills transfer to whatever models and frameworks come next.

Course Architecture

The course follows the evolution of how AI is actually used in reverse engineering — from where most people are today to where the field is heading:

Copy/Paste RE is where most practitioners start: decompile a function, paste it into ChatGPT, read the response, repeat. It works, but it doesn't scale, and the LLM has no context beyond what you feed it.

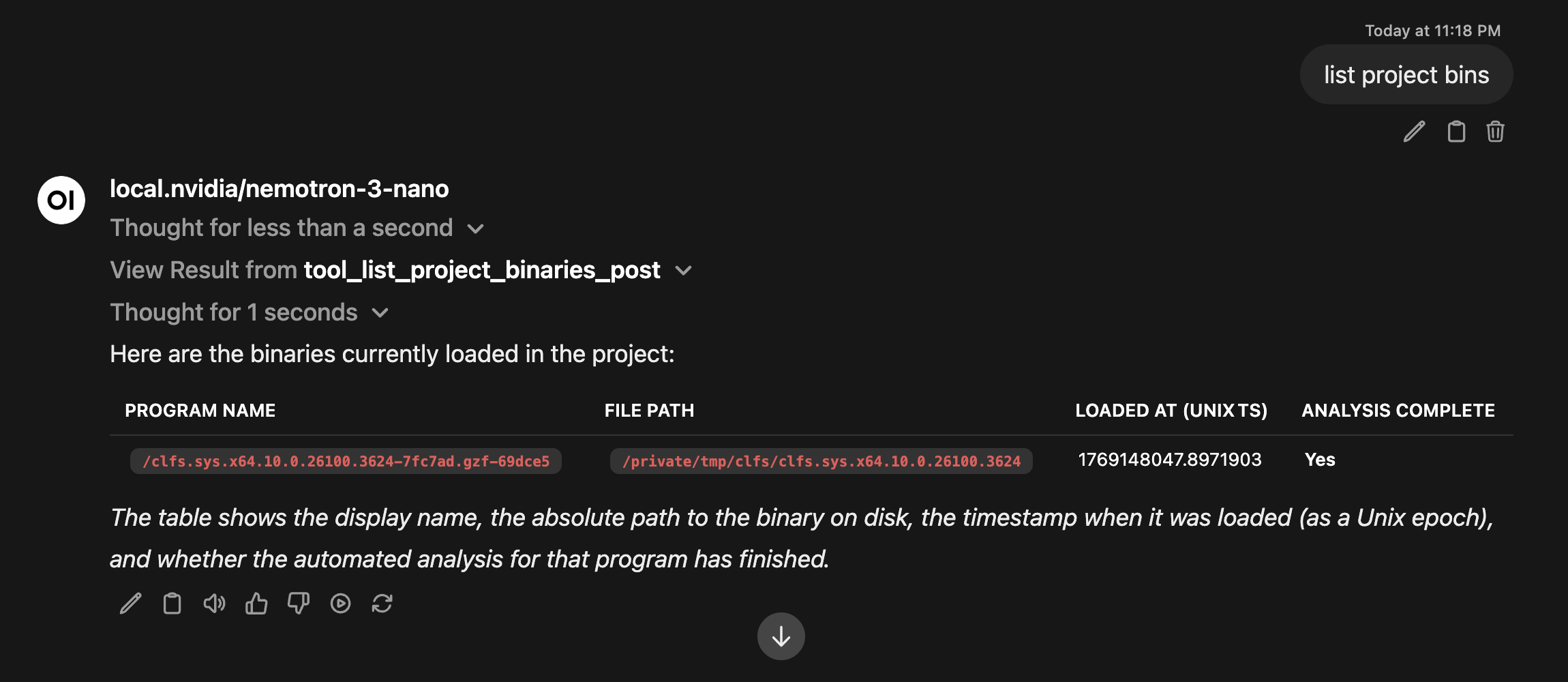

MCP RE eliminates the copy/paste loop. You connect LLMs directly to Ghidra through the Model Context Protocol — the model calls tools, pulls decompilation, and follows cross-references on its own. You go from manually feeding context to giving the LLM access to your entire RE environment.
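To make "the model calls tools" concrete, here is a minimal standard-library sketch of the request and response shapes behind an MCP tool call. MCP is built on JSON-RPC 2.0, and the `tools/list` and `tools/call` method names come from the protocol. Everything else is an assumption for illustration: `decompile_function`, `FAKE_DECOMPILATION`, and `handle_request` are hypothetical names, the decompiler is a dictionary lookup rather than Ghidra, and the initialization handshake and transport are left out. In practice a framework such as FastMCP handles this plumbing for you.

```python
import json
from typing import Any, Callable

# Hypothetical stand-in for a decompiler backend; a real server would ask Ghidra.
FAKE_DECOMPILATION = {"main": "int main(void) { return check_license(); }"}


def decompile_function(name: str) -> str:
    """Toy lookup that returns pseudo-C for a function name."""
    return FAKE_DECOMPILATION.get(name, f"// no function named {name}")


# Registry: tool name -> (callable, description, JSON Schema for its arguments).
TOOLS: dict[str, tuple[Callable[..., str], str, dict[str, Any]]] = {
    "decompile_function": (
        decompile_function,
        "Decompile one function by name.",
        {"type": "object", "properties": {"name": {"type": "string"}}, "required": ["name"]},
    ),
}


def _error(req_id: Any, code: int, message: str) -> str:
    """Build a JSON-RPC 2.0 error response."""
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}})


def handle_request(raw: str) -> str:
    """Answer one JSON-RPC 2.0 request for tools/list or tools/call."""
    req = json.loads(raw)
    req_id, method, params = req.get("id"), req.get("method"), req.get("params", {})
    if method == "tools/list":
        result: dict[str, Any] = {
            "tools": [
                {"name": name, "description": desc, "inputSchema": schema}
                for name, (_, desc, schema) in TOOLS.items()
            ]
        }
    elif method == "tools/call":
        if params.get("name") not in TOOLS:
            return _error(req_id, -32602, "Unknown tool")
        func = TOOLS[params["name"]][0]
        text = func(**params.get("arguments", {}))
        result = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        return _error(req_id, -32601, "Method not found")
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


# The kind of request a client sends when the model decides to decompile `main`.
call = {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
        "params": {"name": "decompile_function", "arguments": {"name": "main"}}}
print(handle_request(json.dumps(call)))
```

The model never sees Ghidra directly. It sees the tool list with its schemas, emits a call, and reads the text that comes back, which is why the quality of tool descriptions and return values matters so much.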

Agentic RE takes it further. Instead of answering one question at a time, agents reason through multi-step analysis autonomously — decompile, trace, classify, validate — following the same process you would, just faster.

Coding Agents close the loop. You encode your RE expertise into Agent Skills and let coding agents generate, test, and refine analysis code on your behalf. The agent isn't just using your tools — it's building new ones.

Course Outline

Part 1 — Foundations of Agentic RE "AI as a computational layer"

You wire an LLM to your RE tools and get results on real binaries from day one. By the end of Part 1, you have a local AI stack running on your own hardware, connected to Ghidra through MCP, and you've used it to explain code you've never seen before.

- The Agentic Era: how LLMs transform reverse engineering and vulnerability research

- LLM basics: tokens, embeddings, quantization (overview)

- Model selection and hardware trade-offs

- Why local LLMs matter: privacy, reproducibility, and control

- Model Context Protocol (MCP): exposing RE tools to LLMs

- Local LLM stack setup and AI-assisted reverse engineering

An LLM calls Ghidra through MCP, retrieves loaded binaries, and explains the results — all from a single prompt.

Part 2 — Custom MCP Servers "AI as an environment you control"

You stop being a consumer of other people's tools and start building your own. You write custom MCP servers that expose your entire RE toolchain to LLMs.

- MCP server basics (Python + FastMCP)

- Custom Ghidra MCP: headless binary analysis, decompilation, and cross-references

- Static analysis through MCP: Semgrep and CodeQL integration

- Multi-binary analysis and semantic search
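One practical detail when exposing static analysis through a tool is what the tool returns: raw scanner output is verbose, and a model works better with a short, ranked summary. The sketch below condenses a Semgrep JSON report into one line per finding. The field names (`results`, `check_id`, `path`, `start.line`, `extra.severity`, `extra.message`) follow Semgrep's `--json` output as we understand it. The function name `summarize_semgrep` is hypothetical and the sample report is invented for illustration, not taken from course material.

```python
import json

SEVERITY_RANK = {"ERROR": 0, "WARNING": 1, "INFO": 2}


def summarize_semgrep(report_json: str, max_findings: int = 20) -> list[str]:
    """Turn a Semgrep JSON report into short one-line findings, most severe first."""
    report = json.loads(report_json)
    results = sorted(
        report.get("results", []),
        key=lambda r: SEVERITY_RANK.get(r.get("extra", {}).get("severity", ""), 3),
    )
    lines = []
    for r in results[:max_findings]:
        extra = r.get("extra", {})
        lines.append(
            f"{extra.get('severity', '?')} {r['path']}:{r['start']['line']} "
            f"{r['check_id']}: {extra.get('message', '').strip()}"
        )
    return lines


# Invented sample report for illustration; rule IDs, paths, and messages are made up.
SAMPLE_REPORT = json.dumps({
    "results": [
        {"check_id": "demo.unchecked-return", "path": "src/net.c", "start": {"line": 88},
         "extra": {"severity": "WARNING", "message": "Return value of recv is ignored."}},
        {"check_id": "demo.unsafe-strcpy", "path": "src/parse.c", "start": {"line": 42},
         "extra": {"severity": "ERROR", "message": "strcpy into fixed-size buffer."}},
    ]
})

for line in summarize_semgrep(SAMPLE_REPORT):
    print(line)
```

Capping the number of findings and sorting by severity keeps the tool response inside a predictable context budget, which matters more for small local models than for frontier ones.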

Part 3 — Adapting LLMs for RE/VR Tasks "AI as a programmable collaborator"

Local models are private and fast, but they're not as smart as frontier models out of the box. You learn to close that gap: context engineering, prompt optimization, and fine-tuning that take a small model from mediocre to accurate on your specific tasks.

- Context engineering and programming with LLMs

- Securing agentic workflows: prompt injection, data sanitization, MCP endpoint security

- Prompt optimization with DSPy (LabeledFewShot, MIPROv2, GEPA)

- Fine-tuning models for vulnerability class detection
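The simplest idea in that list, labeled few-shot prompting, can be sketched in plain Python: sample a handful of labeled examples and place them ahead of the query as demonstrations. This is an illustration of the concept only, not DSPy's API. `Example`, `build_few_shot_prompt`, the prompt wording, and the tiny training set are all hypothetical. Optimizers such as MIPROv2 and GEPA go well beyond this by searching over instructions and demonstrations against a metric.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Example:
    code: str   # snippet shown to the model
    label: str  # vulnerability class a human assigned


def build_few_shot_prompt(trainset: list[Example], query_code: str, k: int = 2, seed: int = 0) -> str:
    """Prepend k randomly sampled labeled demos to the snippet we want classified."""
    rng = random.Random(seed)  # fixed seed keeps runs reproducible
    demos = rng.sample(trainset, min(k, len(trainset)))
    parts = ["Classify the vulnerability class of each snippet."]
    for demo in demos:
        parts.append(f"Code:\n{demo.code}\nLabel: {demo.label}")
    parts.append(f"Code:\n{query_code}\nLabel:")  # left open for the model to complete
    return "\n\n".join(parts)


# Made-up training examples for illustration.
TRAINSET = [
    Example("free(p); p->len = 0;", "use-after-free"),
    Example("char b[8]; strcpy(b, input);", "stack-buffer-overflow"),
    Example("printf(user_input);", "format-string"),
]

print(build_few_shot_prompt(TRAINSET, "free(s); send(s->fd, buf, n, 0);"))
```

Because the demos are data rather than hand-written prose, you can swap the training set or the sampling strategy and measure the effect, which is the shift from prompt writing to prompt optimization.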

Part 4 — Workflows, Orchestration & Capstone "AI as a workflow partner"

Everything comes together. You build autonomous agents that reason through binary analysis, call tools, validate their own findings, and adapt to different platforms.

- Agentic workflow orchestration with ReAct agents

- Lessons from DARPA AIxCC: making agents reliable

- Multi-platform workflows: Windows, macOS, Android, Linux

- Agent Skills and coding agents

- Capstone: Build an integrated RE + VR agentic workflow

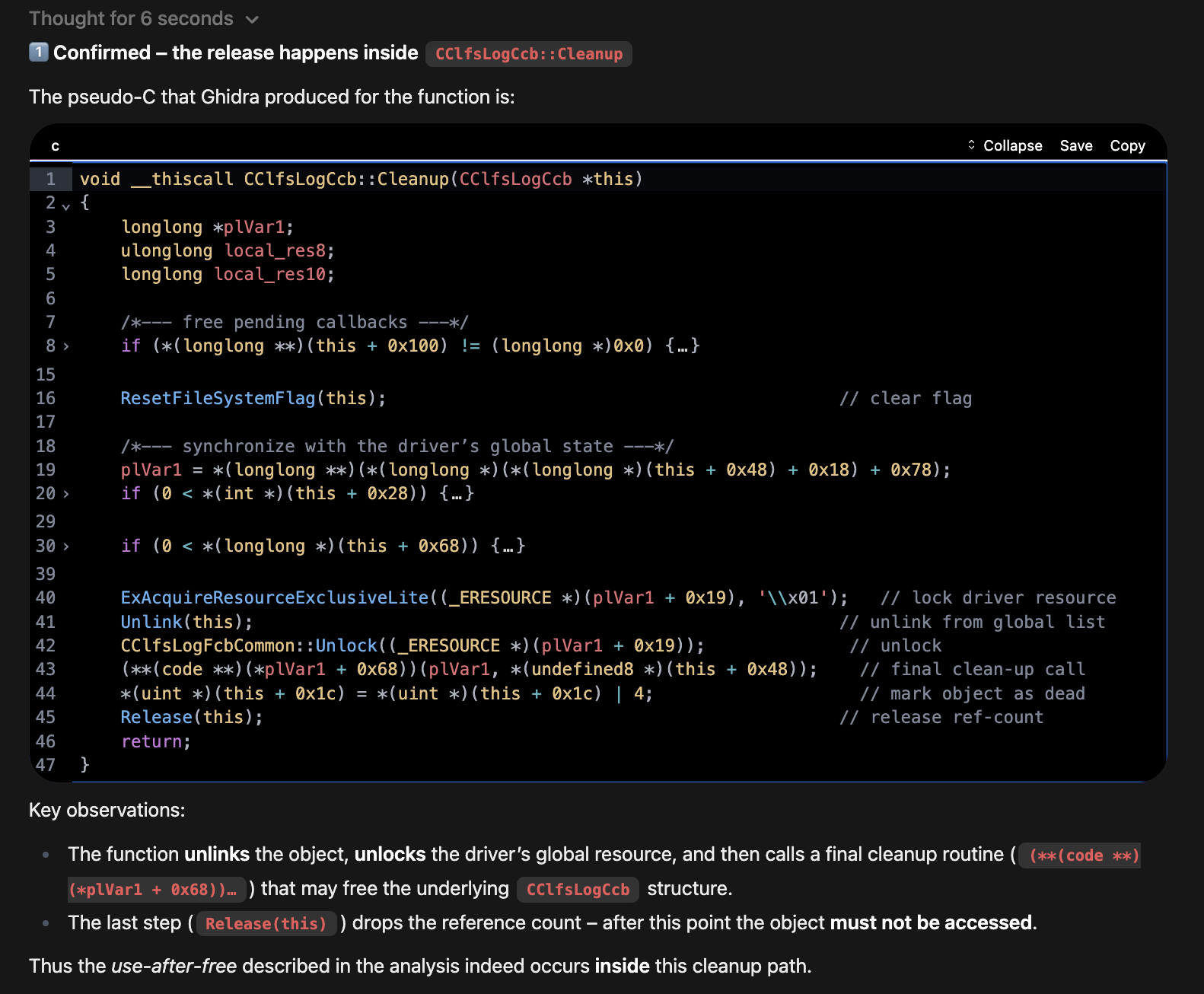

An agent autonomously decompiles a function, annotates the code, and identifies a use-after-free vulnerability — following the same process a researcher would.
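The loop behind a ReAct-style agent is small enough to sketch with the standard library: the model proposes an action, the harness runs the matching tool, the observation goes back into the transcript, and the cycle repeats until the model gives a final answer or the step budget runs out. Everything here is a stand-in for illustration. `scripted_model` replays a fixed script in place of an LLM, the tools return canned strings rather than Ghidra output, and the `Action: tool[arg]` format is one assumed convention among many. The "finding" is scripted, not discovered.

```python
from typing import Callable


def decompile(name: str) -> str:
    """Canned pseudo-C; a real tool would query Ghidra."""
    return f"void {name}(session *s) {{ free(s); log_close(s->id); }}"


def xrefs(name: str) -> str:
    """Canned cross-reference list; a real tool would query Ghidra."""
    return f"{name} is called from handle_disconnect and handle_timeout"


TOOLS: dict[str, Callable[[str], str]] = {"decompile": decompile, "xrefs": xrefs}


def scripted_model(transcript: list[str]) -> str:
    """Hypothetical stand-in for an LLM: chooses the next step by counting observations."""
    seen = sum(1 for line in transcript if line.startswith("Observation:"))
    script = [
        "Action: decompile[free_session]",
        "Action: xrefs[free_session]",
        "Final: possible use-after-free: s->id is read after free(s)",
    ]
    return script[min(seen, len(script) - 1)]


def run_agent(question: str, model: Callable[[list[str]], str], max_steps: int = 5) -> tuple[str, list[str]]:
    """Alternate model steps and tool observations until a final answer or the step limit."""
    transcript = [f"Question: {question}"]
    for _ in range(max_steps):
        step = model(transcript)
        transcript.append(step)
        if step.startswith("Final:"):
            return step.removeprefix("Final:").strip(), transcript
        tool_name, _, arg = step.removeprefix("Action:").strip().partition("[")
        tool = TOOLS.get(tool_name)
        observation = tool(arg.rstrip("]")) if tool else f"unknown tool {tool_name}"
        transcript.append(f"Observation: {observation}")
    return "no answer within step budget", transcript


answer, trace = run_agent("Is free_session safe?", scripted_model)
print(answer)
print("\n".join(trace))
```

The step budget and the explicit handling of unknown tools are the first reliability guards an agent needs. A validation pass over the final claim would slot in just before the answer is returned.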

What Clicks

Students consistently point to these moments as the ones that changed how they think about RE:

- The first MCP connection -- you ask an LLM a question about a binary and watch it call Ghidra, pull the decompilation, and explain what it found. That moment reframes what's possible.

- A small model doing real work -- you take a small model running on your laptop and use prompt optimization to make it perform like a model 10x its size on your specific task. That's when local models stop feeling like a compromise.

- An agent reasoning on its own -- you watch an autonomous agent decompile functions, trace cross-references, and build an assessment of a binary you've never seen, following the same process you would, just faster.

- Triaging vulnerability candidates for a dollar -- you run a batch analysis on real CVEs and see work that took hours reduced to minutes at negligible cost.

What Students Say

"I feel like I am fairly well versed with AI, models, MCPs, etc. and I still learned a lot from this training. Every day I commented to my manager and my team how awesome this training was." -- B., senior security engineer

"The course felt more like an AI course than a strict RE course. The skills and concepts are clearly transferable to other related contexts. That breadth was a pleasant surprise." -- C., security researcher

"I didn't know DSPy at all and my first reaction was 'I'm never going to use this.' But now I might be a convert." -- Z., reverse engineer

"I used to feel overwhelmed and intimidated by how AI can be used for this work. I still am! But at least I feel massively less ignorant about where to even start now. I have specific ideas for my long running research project that I want to try out ASAP." -- M., security professional

"This course showed me how to bring AI into our workflow even without internet access. I'm going back to my team with a plan for local LLMs that leadership can actually get behind." -- D., RE team lead (restricted networks)

Technology Stack

| Category | Technologies |
|---|---|
| AI / LLM | Ollama, OpenWebUI, LM Studio, local models (Qwen, Llama), frontier APIs (OpenAI, Anthropic) |
| RE / VR | Ghidra, pyghidra, Semgrep, CodeQL, Tree-sitter, ghidriff |
| MCP | FastMCP (Python), MCPO proxy |
| Training | DSPy optimizers (MIPROv2, GEPA), QLoRA fine-tuning |
| UI / HUD | Streamlit, Chainlit, Mermaid diagrams |
| Dev | Python 3.11+ (primary), Docker, devcontainers, Jupyter notebooks |

Agentic RE Radar

Profile Insight: Most RE courses teach tools. Most AI courses teach theory. This one sits at Agentic AI (95) and Automation (85) -- you build working systems where LLMs and RE tools operate together on real binaries.

To find out which course is right for you, check out our training pathways.

Who Should Attend

- Reverse engineers who want to add AI-driven automation to their workflows

- Vulnerability researchers looking to speed up bug discovery and triage

- Security professionals who need private, reproducible AI stacks for sensitive work

- Developers and tool builders who want to extend MCP servers and plug AI into RE pipelines

- Applied AI practitioners ready to move past prompt-hacking into real orchestration and workflow design

If you've wanted your RE tools to do more than assist, to actually reason, act, and iterate on their own, this is that course.

Instructor

John McIntosh

John McIntosh (@clearbluejar) is a security researcher at ClearSecLabs specializing in reverse engineering and offensive security. He's known for his expertise in binary analysis, patch diffing, and vulnerability discovery, and has developed multiple open-source tools for vulnerability research.

With over a decade of experience and a strong presence at international security conferences, John brings deep technical insight and a passion for collaborative learning to every course.

Prerequisites

To get the most out of this training, participants should have:

- Intermediate reverse engineering experience (familiarity with Ghidra, IDA, or similar tools)

- Basic vulnerability research knowledge (understanding of common bug classes and analysis workflows)

- Comfort with scripting in Python (used for MCP servers, orchestration, and workflow glue)

- Familiarity with Linux or macOS command‑line environments for stack setup and automation

No prior LLM or AI framework experience needed. We cover the fundamentals before anything advanced.

System Requirements

AI Hardware:

- Course inference infrastructure is provided during the course, so no local GPU is required. Local setup is optional for students who want to run models on their own hardware. Free tiers of frontier model APIs are an alternative for the bring-your-own-model exercises.

- For optional local inference: a machine capable of running at least an 8B model (e.g., Qwen3 or Llama)

- Recommended for local inference: modern GPU (RTX 3060+ or Apple M-series) with 16GB+ RAM
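For students sizing their own hardware, a rough rule of thumb is that weight memory is parameter count times bits per weight divided by eight. The sketch below applies that arithmetic to an 8B model. The function name is hypothetical and the estimate covers weights only. The KV cache, activations, and runtime overhead add to it, and real quantized formats often use somewhat more than their nominal bit width, so treat the numbers as a lower bound.

```python
def estimate_weight_memory_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GiB."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


for bits in (16, 8, 4):
    gib = estimate_weight_memory_gib(8, bits)
    print(f"8B model at {bits}-bit: about {gib:.1f} GiB for weights")
```

By this estimate an 8B model needs roughly 15 GiB at 16-bit and under 4 GiB at 4-bit, which is why quantized 8B models are a common fit for a 16GB machine.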

Software:

- Python 3.11+

- Docker (for OpenWebUI and Ollama)

- git and a Linux-style command-line environment with administrator privileges

Practical Takeaways

What you take home:

- A working local RE+LLM stack you own and control, no subscriptions required

- The ability to build MCP servers that wrap any RE tool you already use, not just the ones covered in class

- Prompt optimization and fine-tuning skills that make small local models perform on your specific tasks

- Reusable workflow patterns for binary analysis, vulnerability discovery, and results validation across platforms

- Agent Skills that encode your RE expertise into reproducible, shareable definitions

- A capstone workflow that integrates everything into one pipeline, and the process to build the next one

The capstone has two paths (or do both): an RE path focused on binary analysis and explanation, and a VR path focused on vulnerability discovery and triage. Both produce a working agentic pipeline.

What Students Receive

- Course slides and training materials

- Preconfigured devcontainers with all labs and tools

- Access to the course CTF server during and after the course

- Course inference infrastructure (no local GPU required)

- Resources for continued learning

- Instructor support via Discord during and after the course

Upcoming Courses

| Date | Event | Location | Registration |
|---|---|---|---|
| March 27, 2026 | Ringzer0 Countermeasure 2026 | Virtual | Register → |
| April 26, 2026 | DEFCON 2026 | Singapore | Register → |
| June 15, 2026 | REcon 2026 | Montreal, Canada | Register → |
| July 6–10, 2026 | CLEARSECLABS 2026 | Virtual | Register → |
| August 1–2, 2026 | Black Hat USA 2026 | Las Vegas, NV | Register → |
| August 3–4, 2026 | Black Hat USA 2026 | Las Vegas, NV | Register → |
| August 10–11, 2026 | DEFCON 2026 | Las Vegas, NV | Register → |
